### What changes were proposed in this pull request?

This patch adds the ability to measure the number of records written by the JDBC writer. Strictly speaking, the value is the number of records updated by the queries, because the JDBC spec returns an update count.
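The distinction between "records handed to the writer" and "rows the driver reports as updated" can be sketched outside of Spark. The snippet below is only an analogy: it uses Python's built-in `sqlite3` module, whose `cursor.rowcount` plays the role of the JDBC update count, and the helper `count_written` is a hypothetical name, not part of the patch.

```python
import sqlite3

def count_written(conn, sql, rows):
    """Run a batched write and return the driver-reported updated-row count."""
    cur = conn.cursor()
    cur.executemany(sql, rows)
    return cur.rowcount  # sqlite3 sums the modifications over the whole batch

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE t (id INTEGER PRIMARY KEY, v TEXT)")

inserted = count_written(conn, "INSERT INTO t VALUES (?, ?)",
                         [(1, "a"), (2, "b"), (3, "c")])
# Two parameter sets go in, but three rows are touched (2 rows + 1 row):
# the metric follows the updated rows, not the number of statements.
updated = count_written(conn, "UPDATE t SET v = ? WHERE id >= ?",
                        [("x", 2), ("y", 3)])
```

For plain inserts the two notions coincide, which is the common case for a writer; they diverge only when a statement touches more or fewer rows than it was given.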

### Why are the changes needed?

Output metrics for the JDBC writer are currently missing. The value of "bytesWritten" is also missing, but it cannot be measured through the JDBC API.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Unit test added.

Closes #26109 from HeartSaVioR/SPARK-29461.

Authored-by: Jungtaek Lim (HeartSaVioR) <kabhwan.opensource@gmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

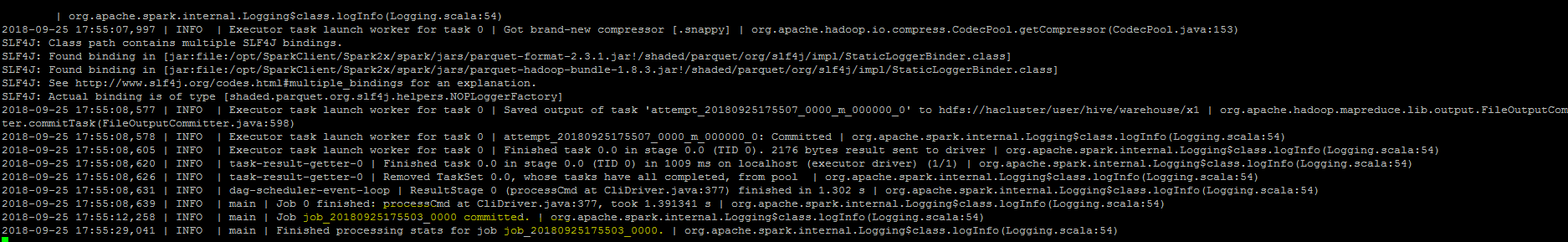

First, a bit of background on the code being changed. The current code tracks metric updates for each task, recording which metrics the task is monitoring and the last update value.

Once a SQL execution finishes, the metrics for all the stages are aggregated by building a list with all (metric ID, value) pairs collected for all tasks in the stages related to the execution, then grouping by metric ID, and then calculating the values shown in the UI.

That is full of inefficiencies:

- in normal operation, all tasks will be tracking and updating the same metrics. So recording the metric IDs per task is wasteful.

- tracking by task means we might be double-counting values if you have speculative tasks (as a comment in the code mentions).

- creating a list of (metric ID, value) is extremely inefficient, because now you have a huge map in memory storing boxed versions of the metric IDs and values.

- same thing for the aggregation part, where now a Seq is built with the values for each metric ID.

The end result is that for large queries, this code can become both really slow, thus affecting the processing of events, and memory hungry.

The updated code changes the approach to the following:

- stages track metrics by their ID; this means the stage tracking code naturally groups values, making aggregation later simpler.

- each metric ID being tracked uses a long array matching the number of partitions of the stage; this means that it's cheap to update the value of the metric once a task ends.

- when aggregating, custom code just concatenates the arrays corresponding to the matching metric IDs; this is cheaper than the previous, boxing-heavy approach.

The end result is that the listener uses about half as much memory as before for tracking metrics, since it doesn't need to track metric IDs per task.
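As a rough illustration of the data layout described above (not the listener's actual code), the following Python sketch keeps one primitive long array per metric ID, indexed by partition, and aggregates by concatenating arrays. The class and function names are hypothetical.

```python
from array import array

class StageMetrics:
    """Tracks metric values for one stage: one long array per metric ID."""

    def __init__(self, num_partitions, metric_ids):
        self.values = {m: array("q", [0] * num_partitions) for m in metric_ids}

    def on_task_end(self, partition, updates):
        # Values are keyed by partition, so in this sketch a speculative
        # duplicate overwrites the slot instead of adding a second value.
        for metric_id, value in updates.items():
            self.values[metric_id][partition] = value

def aggregate(stages):
    """Concatenate the per-stage arrays that share a metric ID."""
    merged = {}
    for stage in stages:
        for metric_id, arr in stage.values.items():
            merged.setdefault(metric_id, array("q")).extend(arr)
    return merged

s0 = StageMetrics(3, [1, 2])
s1 = StageMetrics(2, [1])
s0.on_task_end(0, {1: 10, 2: 5})
s0.on_task_end(0, {1: 10, 2: 5})  # speculative attempt of the same partition
s0.on_task_end(2, {1: 7, 2: 1})
s1.on_task_end(1, {1: 4})
totals = {m: sum(a) for m, a in aggregate([s0, s1]).items()}
```

The arrays hold unboxed 64-bit values, which is the property the description credits for the lower memory use; the metric IDs are stored once per stage rather than once per task.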

I captured heap dumps with the old and the new code during metric aggregation in the listener, for an execution with 3 stages, 100k tasks per stage, 50 metrics updated per task. The dumps contained just reachable memory - so data kept by the listener plus the variables in the aggregateMetrics() method.

With the old code, the thread doing aggregation references >1G of memory - and that does not include temporary data created by the "groupBy" transformation (for which the intermediate state is not referenced in the aggregation method). The same thread with the new code references ~250M of memory. The old code uses about 250M to track all the metric values for that execution, while the new code uses about 130M. (Note the per-thread numbers include the amount used to track the metrics - so, e.g., in the old case, aggregation was referencing about 750M of temporary data.)

I'm also including a small benchmark (based on the Benchmark class) so that we can measure how much changes to this code affect performance. The benchmark contains some extra code to measure things the normal Benchmark class does not, given that the code under test does not really map that well to the expectations of that class.

Running with the old code (I removed results that don't make much sense for this benchmark):

```
[info] Java HotSpot(TM) 64-Bit Server VM 1.8.0_181-b13 on Linux 4.15.0-66-generic
[info] Intel(R) Core(TM) i7-6820HQ CPU 2.70GHz
[info] metrics aggregation (50 metrics, 100k tasks per stage):  Best Time(ms)  Avg Time(ms)
[info] --------------------------------------------------------------------------------------
[info] 1 stage(s)                                                        2113          2118
[info] 2 stage(s)                                                        4172          4392
[info] 3 stage(s)                                                        7755          8460
[info]
[info] Stage Count    Stage Proc. Time    Aggreg. Time
[info]           1                 614            1187
[info]           2                 620            2480
[info]           3                 718            5069
```

With the new code:

```
[info] Java HotSpot(TM) 64-Bit Server VM 1.8.0_181-b13 on Linux 4.15.0-66-generic
[info] Intel(R) Core(TM) i7-6820HQ CPU 2.70GHz
[info] metrics aggregation (50 metrics, 100k tasks per stage):  Best Time(ms)  Avg Time(ms)
[info] --------------------------------------------------------------------------------------
[info] 1 stage(s)                                                         727           886
[info] 2 stage(s)                                                        1722          1983
[info] 3 stage(s)                                                        2752          3013
[info]
[info] Stage Count    Stage Proc. Time    Aggreg. Time
[info]           1                 408             177
[info]           2                 389             423
[info]           3                 372             660
```

So the new code is faster than the old when processing task events, and about an order of magnitude faster when aggregating metrics.
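For reference, the per-stage-count speedups can be worked out directly from the "Stage Proc. Time" and "Aggreg. Time" columns of the two benchmark runs. The short Python calculation below only restates those published numbers; the dictionary layout is an illustrative choice.

```python
# (stage processing time, aggregation time) per stage count, copied from the
# two benchmark outputs in this description.
old = {1: (614, 1187), 2: (620, 2480), 3: (718, 5069)}
new = {1: (408, 177), 2: (389, 423), 3: (372, 660)}

# Speedup = old time / new time, rounded to one decimal place.
speedups = {
    stages: (round(old[stages][0] / new[stages][0], 1),
             round(old[stages][1] / new[stages][1], 1))
    for stages in old
}
```

On these numbers, stage processing is roughly 1.5x to 1.9x faster, and aggregation is roughly 6x to 8x faster.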

Note this still leaves room for improvement; for example, using the above measurements, 600ms is still a huge amount of time to spend in an event handler. But I'll leave further enhancements for a separate change.

Tested with benchmarking code + existing unit tests.

Closes #26218 from vanzin/SPARK-29562.

Authored-by: Marcelo Vanzin <vanzin@cloudera.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Track timing info for each rule in the optimization phase using `QueryPlanningTracker` in Structured Streaming.

### Why are the changes needed?

In Structured Streaming, rule info is tracked only in the analysis phase, not in the optimization phase.

### Does this PR introduce any user-facing change?

No

Closes #25914 from wenxuanguan/spark-29227.

Authored-by: wenxuanguan <choose_home@126.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Add UncacheTableStatement and make UNCACHE TABLE go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. For example:

```
USE my_catalog
DESC t // succeeds and describes the table t from my_catalog
UNCACHE TABLE t // reports table not found, as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running UNCACHE TABLE, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

New unit tests

Closes #26237 from imback82/uncache_table.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Remove the requirement of fetch_size>=0 from JDBCOptions to allow negative fetch size.

### Why are the changes needed?

To allow fetching data in a streaming manner (row-by-row fetch) from a MySQL database.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Unit test (JDBCSuite)

This closes #26230.

Closes #26244 from fuwhu/SPARK-21287-FIX.

Authored-by: fuwhu <bestwwg@163.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add a description for `ignoreNullFields`, which was committed in #26098, to DataFrameWriter and readwriter.py.

Enable users to use `ignoreNullFields` in PySpark.
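To make the option's effect concrete, here is a small Python sketch of the behavior the name suggests: when the flag is on, fields whose value is null are left out of the generated JSON. The function `to_json` is a hypothetical stand-in that handles only top-level fields of a dict; it is not Spark's JSON generator.

```python
import json

def to_json(row, ignore_null_fields):
    """Serialize a dict row, optionally dropping top-level fields that are None."""
    if ignore_null_fields:
        row = {k: v for k, v in row.items() if v is not None}
    return json.dumps(row)

with_nulls = to_json({"a": 1, "b": None}, ignore_null_fields=False)
without_nulls = to_json({"a": 1, "b": None}, ignore_null_fields=True)
```

Here `with_nulls` keeps `"b": null` in the output, while `without_nulls` contains only the `a` field.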

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

run unit tests

Closes #26227 from stczwd/json-generator-doc.

Authored-by: stczwd <qcsd2011@163.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Only use antlr4 to parse the interval string, and remove the duplicated parsing logic from `CalendarInterval`.

### Why are the changes needed?

Simplify the code and fix inconsistent behaviors.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Pass the Jenkins with the updated test cases.

Closes #26190 from cloud-fan/parser.

Lead-authored-by: Wenchen Fan <wenchen@databricks.com>

Co-authored-by: Dongjoon Hyun <dongjoon@apache.org>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Support using IN/EXISTS with a subquery in a JOIN condition in Spark SQL.

### Why are the changes needed?

To support SQL that uses IN/EXISTS with a subquery in a JOIN condition.

### Does this PR introduce any user-facing change?

This PR enables users to use a subquery in a `JOIN`'s ON condition. For example, given three tables:

```
CREATE TABLE A(id String);
CREATE TABLE B(id String);
CREATE TABLE C(id String);
```

we can run a query like:

```
SELECT A.id from A JOIN B ON A.id = B.id and A.id IN (select C.id from C)
```

### How was this patch tested?

Added unit tests.

Closes #25854 from AngersZhuuuu/SPARK-29145.

Lead-authored-by: angerszhu <angers.zhu@gmail.com>

Co-authored-by: AngersZhuuuu <angers.zhu@gmail.com>

Signed-off-by: Takeshi Yamamuro <yamamuro@apache.org>

### What changes were proposed in this pull request?

Reimplement the iterator in UnsafeExternalRowSorter in database style. This can be done by reusing the `RowIterator` in our code base.

### Why are the changes needed?

While working on #26164, which introduced a var `isReleased` in `hasNext`, we found it is possible that `isReleased` is false when `hasNext` is called but becomes true before `next` is called. A safer approach is a database-style iterator with `advanceNext` and `getRow`.
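The difference between the two iterator styles can be sketched in a few lines of Python. This is a simplified, single-threaded illustration with hypothetical names (`advance_next`, `get_row`, `release`), not the `RowIterator` class from the Spark code base: the point is that the "is there a row?" check and the move to that row happen in one call, so a release in between cannot invalidate the answer.

```python
class RowIterator:
    """Database-style iterator: advance_next() moves, get_row() reads."""

    def __init__(self, rows):
        self._rows = list(rows)
        self._pos = -1
        self._current = None
        self._released = False

    def release(self):
        # Stands in for the sorter freeing its underlying memory.
        self._released = True
        self._rows = []

    def advance_next(self):
        # The released check and the move happen together, so there is no gap
        # between a hasNext-style check and a next-style read.
        if self._released or self._pos + 1 >= len(self._rows):
            return False
        self._pos += 1
        self._current = self._rows[self._pos]
        return True

    def get_row(self):
        # Returns the row captured by the last successful advance_next().
        return self._current

it = RowIterator(["r1", "r2", "r3"])
seen = []
while it.advance_next():
    seen.append(it.get_row())
    if len(seen) == 2:
        it.release()  # resources go away mid-iteration
```

After the release, the next `advance_next()` simply returns `False`, and the loop ends without ever reading from freed state.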

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing UT.

Closes #26229 from xuanyuanking/SPARK-21492-follow-up.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add CacheTableStatement and make CACHE TABLE go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. For example:

```
USE my_catalog
DESC t // succeeds and describes the table t from my_catalog
CACHE TABLE t // reports table not found, as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running CACHE TABLE, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26179 from viirya/SPARK-29522.

Lead-authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Co-authored-by: Liang-Chi Hsieh <liangchi@uber.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This is a follow-up of #24052 to correct the assert condition.

### Why are the changes needed?

To test the IllegalArgumentException condition.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Manual test (this issue was found while fixing SPARK-29453).

Closes #26234 from 07ARB/SPARK-29571.

Authored-by: 07ARB <ankitrajboudh@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

This is a follow-up of https://github.com/apache/spark/pull/26189 to regenerate the result on EC2.

### Why are the changes needed?

This will be used for the other PR reviews.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

N/A.

Closes #26233 from dongjoon-hyun/SPARK-29533.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: DB Tsai <d_tsai@apple.com>

### What changes were proposed in this pull request?

The `OptimizeLocalShuffleReader` rule is very conservative and gives up the optimization as soon as any extra shuffle is introduced. It's very likely that most of the added local shuffle readers are fine and only one introduces an extra shuffle.

However, it's very hard to make `OptimizeLocalShuffleReader` optimal. A simple workaround is to run this rule again right before executing a query stage.

### Why are the changes needed?

To convert more shuffle readers into local shuffle readers.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

existing ut

Closes #26207 from JkSelf/resolve-multi-joins-issue.

Authored-by: jiake <ke.a.jia@intel.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add RefreshTableStatement and make REFRESH TABLE go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. For example:

```
USE my_catalog
DESC t // succeeds and describes the table t from my_catalog
REFRESH TABLE t // reports table not found, as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running REFRESH TABLE, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

New unit tests

Closes #26183 from imback82/refresh_table.

Lead-authored-by: Terry Kim <yuminkim@gmail.com>

Co-authored-by: Terry Kim <terryk@terrys-mbp-2.lan>

Signed-off-by: Liang-Chi Hsieh <liangchi@uber.com>

### What changes were proposed in this pull request?

This moves the tracking of active queries from per-SparkSession state to the state shared across SparkSessions, for better safety in isolated SparkSession environments.

### Why are the changes needed?

We have checks to prevent restarting the same stream on the same Spark session, but we can improve on that in multi-tenant environments by putting that state in the SharedState instead of the SessionState. This allows a more comprehensive check for multi-tenant clusters.
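The idea can be sketched as a registry of active query IDs that lives in an object shared by all sessions, rather than in each session. The Python below is a minimal sketch under that assumption; `SharedState`, `Session`, `register` and `start_query` are hypothetical names, and the error handling is illustrative only.

```python
import threading

class SharedState:
    """State shared by every session created from the same context."""

    def __init__(self):
        self._lock = threading.Lock()
        self._active = {}  # query id -> name of the session running it

    def register(self, query_id, session_name):
        with self._lock:
            owner = self._active.get(query_id)
            if owner is not None:
                raise RuntimeError(
                    f"query {query_id} is already active in session {owner}")
            self._active[query_id] = session_name

    def unregister(self, query_id):
        with self._lock:
            self._active.pop(query_id, None)

class Session:
    def __init__(self, name, shared):
        self.name, self.shared = name, shared

    def start_query(self, query_id):
        # The check goes through the shared registry, so it also sees
        # queries started from other sessions.
        self.shared.register(query_id, self.name)

shared = SharedState()
a, b = Session("a", shared), Session("b", shared)
a.start_query("q1")
try:
    b.start_query("q1")
    conflict = False
except RuntimeError:
    conflict = True
```

With per-session state, session `b` would have no way to know that `q1` is already running in session `a`; with the shared registry the second start is rejected.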

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Added tests to StreamingQueryManagerSuite

Closes #26018 from brkyvz/sharedStreamingQueryManager.

Lead-authored-by: Burak Yavuz <burak@databricks.com>

Co-authored-by: Burak Yavuz <brkyvz@gmail.com>

Signed-off-by: Burak Yavuz <brkyvz@gmail.com>

### What changes were proposed in this pull request?

This PR adds `CREATE NAMESPACE` support for V2 catalogs.

### Why are the changes needed?

Currently, you cannot explicitly create namespaces for v2 catalogs.

### Does this PR introduce any user-facing change?

The user can now perform the following:

```SQL

CREATE NAMESPACE mycatalog.ns

```

to create a namespace `ns` inside `mycatalog` V2 catalog.

### How was this patch tested?

Added unit tests.

Closes #26166 from imback82/create_namespace.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add ShowPartitionsStatement and make SHOW PARTITIONS go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users.

### Does this PR introduce any user-facing change?

Yes. When running SHOW PARTITIONS, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26198 from huaxingao/spark-29539.

Authored-by: Huaxin Gao <huaxing@us.ibm.com>

Signed-off-by: Liang-Chi Hsieh <liangchi@uber.com>

### What changes were proposed in this pull request?

Add TruncateTableStatement and make TRUNCATE TABLE go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. For example:

```
USE my_catalog
DESC t // succeeds and describes the table t from my_catalog
TRUNCATE TABLE t // reports table not found, as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running TRUNCATE TABLE, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

Unit tests.

Closes #26174 from viirya/SPARK-29517.

Authored-by: Liang-Chi Hsieh <viirya@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

We need a new mechanism by which downstream operators can notify their parents that they may release the output data stream. This PR implements the mechanism as follows:

- Add a function named `cleanupResources` to SparkPlan, which by default calls the children's `cleanupResources` function. An operator that needs resource cleanup should override it to do its own cleanup and also call `super.cleanupResources`, as SortExec does in this PR.

- Add support on the trigger side, which in this PR is SortMergeJoinExec: it makes sure to call `cleanupResources` to do the cleanup for all of its upstream (children) operators.
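The two bullets above describe a recursive cleanup hook on the plan tree. A minimal Python sketch of that shape follows; the class names mirror the operators mentioned in the text, but the bodies (the `buffer` list, the `execute` method) are hypothetical placeholders rather than Spark's implementation.

```python
class SparkPlan:
    def __init__(self, *children):
        self.children = list(children)

    def cleanup_resources(self):
        # Default behavior: just forward the request to the children.
        for child in self.children:
            child.cleanup_resources()

class Scan(SparkPlan):
    pass

class SortExec(SparkPlan):
    def __init__(self, child):
        super().__init__(child)
        self.buffer = ["row"] * 3  # stands in for the sorter's memory

    def cleanup_resources(self):
        self.buffer = []             # operator-specific cleanup
        super().cleanup_resources()  # keep propagating down the tree

class SortMergeJoinExec(SparkPlan):
    def execute(self):
        # ... join logic elided; once no more input is needed,
        # the join triggers cleanup for everything below it.
        self.cleanup_resources()

left, right = SortExec(Scan()), SortExec(Scan())
join = SortMergeJoinExec(left, right)
join.execute()
```

Operators that hold nothing (like `Scan` here) inherit the default and only pass the call along, so adding cleanup to a new operator means overriding one method.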

### Why are the changes needed?

Bugfix for SortMergeJoin memory leak, and implement a general framework for SparkPlan resource cleanup.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

UT: Added a new test suite, JoinWithResourceCleanSuite, to check both the standard and the code generation scenarios.

Integration test: tested with driver/executor default memory set to 1g, in local mode with 10 threads. The test below (thanks to taosaildrone for providing it [here](https://github.com/apache/spark/pull/23762#issuecomment-463303175)) passes with this PR.

```python
# Run in a PySpark shell, where `spark` is the active SparkSession.
from pyspark.sql.functions import rand, col

spark.conf.set("spark.sql.join.preferSortMergeJoin", "true")
spark.conf.set("spark.sql.autoBroadcastJoinThreshold", -1)
# spark.conf.set("spark.sql.sortMergeJoinExec.eagerCleanupResources", "true")

r1 = spark.range(1, 1001).select(col("id").alias("timestamp1"))
r1 = r1.withColumn('value', rand())
r2 = spark.range(1000, 1001).select(col("id").alias("timestamp2"))
r2 = r2.withColumn('value2', rand())

joined = r1.join(r2, r1.timestamp1 == r2.timestamp2, "inner")
joined = joined.coalesce(1)
joined.explain()
joined.show()
```

Closes #26164 from xuanyuanking/SPARK-21492.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

As I commented in [SPARK-29516](https://github.com/apache/spark/pull/26172#issuecomment-544364977), the parsing done by the SparkSession.sql() method does not run under the current SparkSession's conf, so some parser-related configurations do not take effect in multi-threaded situations.

This PR adds a SQLConf parameter to AbstractSqlParser and initializes it with the SessionState's conf.

Each SparkSession's parser then uses its own SessionState's SQLConf, which makes it thread safe.

### Why are the changes needed?

Fix bug

### Does this PR introduce any user-facing change?

NO

### How was this patch tested?

NO

Closes #26187 from AngersZhuuuu/SPARK-29530.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Only invoke `checkAndGlobPathIfNecessary()` when we have to use `InMemoryFileIndex`.

### Why are the changes needed?

Avoid unnecessary function invocation.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Pass Jenkins.

Closes #26196 from Ngone51/dev-avoid-unnecessary-invocation-on-globpath.

Authored-by: wuyi <ngone_5451@163.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

I extended `ExtractBenchmark` to support the `INTERVAL` type of the `source` parameter of the `date_part` function.

### Why are the changes needed?

- To detect performance issues while changing implementation of the `date_part` function in the future.

- To find out current performance bottlenecks in `date_part` for the `INTERVAL` type

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running the benchmark and printing the values produced for each `field` value.

Closes #26175 from MaxGekk/extract-interval-benchmark.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Added a new benchmark, `IntervalBenchmark`, to measure the performance of interval-related functions. In this PR, I added benchmarks for casting strings to intervals, in particular for interval strings with and without the `interval` prefix, because there is special code for this da576a737c/common/unsafe/src/main/java/org/apache/spark/unsafe/types/CalendarInterval.java (L100-L103) . I also added benchmarks for different numbers of units in interval strings; for example, 1 unit is `interval 10 years`, 2 units without the `interval` prefix is `10 years 5 months`, and so on.

### Why are the changes needed?

- To find out current performance issues in casting to intervals

- The benchmark can be used while refactoring/re-implementing `CalendarInterval.fromString()` or `CalendarInterval.fromCaseInsensitiveString()`.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

By running the benchmark via the command:

```shell

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.IntervalBenchmark"

```

Closes #26189 from MaxGekk/interval-from-string-benchmark.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

```
bit_and(expression) -- The bitwise AND of all non-null input values, or null if none
bit_or(expression) -- The bitwise OR of all non-null input values, or null if none
```

More details:

https://www.postgresql.org/docs/9.3/functions-aggregate.html
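The semantics stated above (combine all non-null inputs, return null if there are none) can be sketched in Python as follows. The helper names are illustrative, and `None` stands in for SQL NULL.

```python
from functools import reduce
from operator import and_, or_

def _bit_agg(op, values):
    """Fold `op` over the non-null values; None if there are none."""
    non_null = [v for v in values if v is not None]
    return reduce(op, non_null) if non_null else None

def bit_and(values):
    return _bit_agg(and_, values)

def bit_or(values):
    return _bit_agg(or_, values)
```

For instance, over the values 3 (`0b011`), 5 (`0b101`) and NULL, `bit_and` keeps only the bit common to both numbers and `bit_or` collects every set bit.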

### Why are the changes needed?

PostgreSQL, MySQL and many other popular databases support them.

### Does this PR introduce any user-facing change?

Yes, it adds two bitwise aggregate functions.

### How was this patch tested?

add ut

Closes #26155 from yaooqinn/SPARK-27879.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR fixes the "associated location already exists" error in `SQLQueryTestSuite`:

```
build/sbt "~sql/test-only *SQLQueryTestSuite -- -z postgreSQL/join.sql"
...
[info] - postgreSQL/join.sql *** FAILED *** (35 seconds, 420 milliseconds)
[info] postgreSQL/join.sql
[info] Expected "[]", but got "[org.apache.spark.sql.AnalysisException
[info] Can not create the managed table('`default`.`tt3`'). The associated location('file:/root/spark/sql/core/spark-warehouse/org.apache.spark.sql.SQLQueryTestSuite/tt3') already exists.;]" Result did not match for query #108
```

### Why are the changes needed?

Fix bug.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

N/A

Closes #26181 from wangyum/TestError.

Authored-by: Yuming Wang <yumwang@ebay.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Add RepairTableStatement and make REPAIR TABLE go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. For example:

```
USE my_catalog
DESC t // succeeds and describes the table t from my_catalog
MSCK REPAIR TABLE t // reports table not found, as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running MSCK REPAIR TABLE, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

New unit tests

Closes #26168 from imback82/repair_table.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Liang-Chi Hsieh <liangchi@uber.com>

### What changes were proposed in this pull request?

Currently, Spark SQL's `SHOW FUNCTIONS` doesn't show `!=`, `<>`, `between`, `case`.

But these expressions are truly functions. We should show them in `SHOW FUNCTIONS`.

### Why are the changes needed?

So that `SHOW FUNCTIONS` shows '!=', '<>', 'between', 'case'.

### Does this PR introduce any user-facing change?

Yes. `SHOW FUNCTIONS` now shows '!=', '<>', 'between', 'case'.

### How was this patch tested?

UT

Closes #26053 from AngersZhuuuu/SPARK-29379.

Authored-by: angerszhu <angers.zhu@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The `date_part()` function can accept the `source` parameter of the `INTERVAL` type (`CalendarIntervalType`). The following values of the `field` parameter are supported:

- `"MILLENNIUM"` (`"MILLENNIA"`, `"MIL"`, `"MILS"`) - number of millennia in the given interval. It is `YEAR / 1000`.

- `"CENTURY"` (`"CENTURIES"`, `"C"`, `"CENT"`) - number of centuries in the interval calculated as `YEAR / 100`.

- `"DECADE"` (`"DECADES"`, `"DEC"`, `"DECS"`) - decades in the `YEAR` part of the interval calculated as `YEAR / 10`.

- `"YEAR"` (`"Y"`, `"YEARS"`, `"YR"`, `"YRS"`) - years in a value of `CalendarIntervalType`. It is `MONTHS / 12`.

- `"QUARTER"` (`"QTR"`) - a quarter of the year calculated as `MONTHS / 3 + 1`.

- `"MONTH"` (`"MON"`, `"MONS"`, `"MONTHS"`) - the months part of the interval calculated as `CalendarInterval.months % 12`

- `"DAY"` (`"D"`, `"DAYS"`) - total number of days in `CalendarInterval.microseconds`

- `"HOUR"` (`"H"`, `"HOURS"`, `"HR"`, `"HRS"`) - the hour part of the interval.

- `"MINUTE"` (`"M"`, `"MIN"`, `"MINS"`, `"MINUTES"`) - the minute part of the interval.

- `"SECOND"` (`"S"`, `"SEC"`, `"SECONDS"`, `"SECS"`) - the seconds part with fractional microsecond part.

- `"MILLISECONDS"` (`"MSEC"`, `"MSECS"`, `"MILLISECON"`, `"MSECONDS"`, `"MS"`) - the millisecond part of the interval with fractional microsecond part.

- `"MICROSECONDS"` (`"USEC"`, `"USECS"`, `"USECONDS"`, `"MICROSECON"`, `"US"`) - the total number of microseconds in the `second`, `millisecond` and `microsecond` parts of the given interval.

- `"EPOCH"` - the total number of seconds in the interval including the fractional part with microsecond precision. Here we assume 365.25 days per year (leap year every four years).

For example:

```sql
> SELECT date_part('days', interval 1 year 10 months 5 days);
5
> SELECT date_part('seconds', interval 30 seconds 1 milliseconds 1 microseconds);
30.001001
```
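Several of the field formulas listed above can be checked with a small Python sketch that models an interval as a `(months, microseconds)` pair, matching the `CalendarInterval.months` and `CalendarInterval.microseconds` fields named in the list. This is a simplified illustration, not Spark's implementation: it assumes non-negative intervals, supports only the canonical field names (no aliases such as `'days'`), and the `HOUR` and `MINUTE` formulas are an assumed reading of "the hour part" and "the minute part".

```python
from decimal import Decimal

MICROS_PER_SECOND = 1_000_000
MICROS_PER_MINUTE = 60 * MICROS_PER_SECOND
MICROS_PER_HOUR = 60 * MICROS_PER_MINUTE
MICROS_PER_DAY = 24 * MICROS_PER_HOUR

def date_part(field, months, microseconds):
    """Extract a field from a non-negative (months, microseconds) interval."""
    years = months // 12
    extractors = {
        "MILLENNIUM": lambda: years // 1000,
        "CENTURY": lambda: years // 100,
        "DECADE": lambda: years // 10,
        "YEAR": lambda: years,
        "MONTH": lambda: months % 12,
        "DAY": lambda: microseconds // MICROS_PER_DAY,
        # Assumed: hour and minute are the remainders within a day / an hour.
        "HOUR": lambda: microseconds % MICROS_PER_DAY // MICROS_PER_HOUR,
        "MINUTE": lambda: microseconds % MICROS_PER_HOUR // MICROS_PER_MINUTE,
        # Seconds keep the fractional microsecond part, so use Decimal.
        "SECOND": lambda: Decimal(microseconds % MICROS_PER_MINUTE) / MICROS_PER_SECOND,
    }
    return extractors[field.upper()]()

# interval 1 year 10 months 5 days -> 22 months, 5 days' worth of microseconds
days = date_part("day", 22, 5 * MICROS_PER_DAY)
# interval 30 seconds 1 milliseconds 1 microseconds -> 30_001_001 microseconds
seconds = date_part("second", 0, 30_001_001)
```

These two calls correspond to the two SQL examples above.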

### Why are the changes needed?

To maintain feature parity with PostgreSQL (https://www.postgresql.org/docs/11/functions-datetime.html#FUNCTIONS-DATETIME-EXTRACT)

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

- Added new test suite `IntervalExpressionsSuite`

- Add new test cases to `date_part.sql`

Closes #25981 from MaxGekk/extract-from-intervals.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

A followup of [#25295](https://github.com/apache/spark/pull/25295).

1) change the logWarning to logDebug in `OptimizeLocalShuffleReader`.

2) update the test to check whether query stage reuse can work well with local shuffle reader.

### Why are the changes needed?

make code robust

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

existing tests

Closes #26157 from JkSelf/followup-25295.

Authored-by: jiake <ke.a.jia@intel.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

The handling of the catalog across plans should be as follows ([SPARK-29014](https://issues.apache.org/jira/browse/SPARK-29014)):

* The *current* catalog should be used when no catalog is specified

* The default catalog is the catalog *current* is initialized to

* If the *default* catalog is not set, then *current* catalog is the built-in Spark session catalog.

This PR addresses the issue where *current* catalog usage is not followed as described above.
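The three rules above can be sketched as a tiny resolver. Everything in the Python below is hypothetical (the `CatalogManager` class here is not Spark's class of the same name, and `"session_catalog"` is just a label for the built-in session catalog); it only illustrates how *default*, *current* and an explicitly named catalog interact.

```python
SESSION_CATALOG = "session_catalog"  # label for the built-in session catalog

class CatalogManager:
    def __init__(self, registered, default=None):
        self.registered = set(registered)
        # "current" is initialized to the default catalog; without a default,
        # it is the built-in session catalog.
        self.current = default if default is not None else SESSION_CATALOG

    def use(self, catalog):
        self.current = catalog

    def resolve(self, name_parts):
        """Return (catalog, remaining identifier) for a multi-part name."""
        if len(name_parts) > 1 and name_parts[0] in self.registered:
            return name_parts[0], name_parts[1:]  # catalog given explicitly
        return self.current, name_parts           # fall back to "current"

manager = CatalogManager({"my_catalog"})
before_use = manager.resolve(["t"])
manager.use("my_catalog")
after_use = manager.resolve(["t"])
explicit = manager.resolve(["my_catalog", "db", "t"])
```

The key point is the fallback branch: an unqualified name goes to the *current* catalog, not unconditionally to the session catalog.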

### Why are the changes needed?

It is a bug as described in the previous section.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Unit tests added.

Closes #26120 from imback82/cleanup_catalog.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add `AnalyzeTableStatement` and `AnalyzeColumnStatement`, and make ANALYZE TABLE go through the same catalog/table resolution framework of v2 commands.

### Why are the changes needed?

It's important to make all the commands have the same table resolution behavior, to avoid confusing end-users. For example:

```
USE my_catalog
DESC t // succeeds and describes the table t from my_catalog
ANALYZE TABLE t // reports table not found, as there is no table t in the session catalog
```

### Does this PR introduce any user-facing change?

Yes. When running ANALYZE TABLE, Spark fails the command if the current catalog is set to a v2 catalog, or if the table name specifies a v2 catalog.

### How was this patch tested?

new tests

Closes #26129 from cloud-fan/analyze-table.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Gengliang Wang <gengliang.wang@databricks.com>

### What changes were proposed in this pull request?

This PR fixes a few typos:

1. Sparks => Spark's

2. parallize => parallelize

3. doesnt => doesn't

Closes #26140 from plusplusjiajia/fix-typos.

Authored-by: Jiajia Li <jiajia.li@intel.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

BIT_COUNT(N) - Returns the number of bits that are set in the argument N as an unsigned 64-bit integer, or NULL if the argument is NULL
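A minimal Python sketch of the described behavior: the argument is viewed as an unsigned 64-bit integer, its set bits are counted, and NULL (here `None`) is passed through. The function below is an illustration, not the Spark expression; in particular, masking to 64 bits is how this sketch models "as an unsigned 64-bit integer" for negative inputs.

```python
def bit_count(n):
    """Count the set bits of n viewed as an unsigned 64-bit integer."""
    if n is None:
        return None
    # Masking maps negative values to their 64-bit two's complement pattern.
    return bin(n & 0xFFFFFFFFFFFFFFFF).count("1")
```

Under this reading, `bit_count(7)` is 3 and `bit_count(-1)` is 64, since all 64 bits of the two's complement pattern are set.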

### Why are the changes needed?

Supported by MySQL, Microsoft SQL Server, etc.

### Does this PR introduce any user-facing change?

add a built-in function

### How was this patch tested?

add uts

Closes #26139 from yaooqinn/SPARK-29491.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

This PR takes over #19788. After we split the shuffle fetch protocol from `OpenBlock` in #24565, this optimization can be extended in the new shuffle protocol. Credit to yucai, closes #19788.

### What changes were proposed in this pull request?

This PR adds the support for continuous shuffle block fetching in batch:

- Shuffle client changes:

  - Add new feature tag `spark.shuffle.fetchContinuousBlocksInBatch`, implement the decision logic in `BlockStoreShuffleReader`.

  - Merge the continuous shuffle block ids in batch if needed in ShuffleBlockFetcherIterator.

- Shuffle server changes:

  - Add support in `ExternalBlockHandler` for the external shuffle service side.

  - Make `ShuffleBlockResolver.getBlockData` accept getting block data by range.

- Protocol changes:

  - Add new block id type `ShuffleBlockBatchId` to represent continuous shuffle block ids.

  - Extend `FetchShuffleBlocks` and `OneForOneBlockFetcher`.

  - After the new shuffle fetch protocol completed in #24565, the backward compatibility for external shuffle service can be controlled by `spark.shuffle.useOldFetchProtocol`.

### Why are the changes needed?

In adaptive execution, one reducer may fetch multiple continuous shuffle blocks from one map output file. With the original approach, however, the reducer fetches those blocks one by one (10 separate fetches in the example below). This requires many IO operations and hurts performance. This PR adds support for fetching those continuous shuffle blocks in a single IO (in batch). See the example below:

The shuffle block is stored like below:

The shuffle block ID format is `s"shuffle_$shuffleId_$mapId_$reduceId"`; see BlockId.scala.

In adaptive execution, one reducer may want to read output for reducer 5 to 14, whose block Ids are from shuffle_0_x_5 to shuffle_0_x_14.

Before this PR, Spark needs 10 disk IOs + 10 network IOs for each output file.

After this PR, Spark only needs 1 disk IO and 1 network IO. This way can reduce IO dramatically.
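
The batching idea in the example above can be sketched in a few lines of Python. This is only an illustration under assumptions, not the code in `ShuffleBlockFetcherIterator`: the helper names are hypothetical, block IDs are assumed to arrive in order, and the map ID `3` stands in for the `x` placeholder used above.

```python
from typing import List, Tuple


def parse_block_id(block_id: str) -> Tuple[int, int, int]:
    """Split 'shuffle_<shuffleId>_<mapId>_<reduceId>' into its three numbers."""
    prefix, shuffle_id, map_id, reduce_id = block_id.split("_")
    if prefix != "shuffle":
        raise ValueError(f"not a shuffle block id: {block_id}")
    return int(shuffle_id), int(map_id), int(reduce_id)


def merge_contiguous(block_ids: List[str]) -> List[Tuple[int, int, int, int]]:
    """Merge runs of consecutive reduce IDs from the same map output.

    Returns (shuffle_id, map_id, start_reduce_id, end_reduce_id) tuples,
    where end_reduce_id is exclusive. Each tuple models one batch fetch.
    """
    batches: List[Tuple[int, int, int, int]] = []
    for shuffle_id, map_id, reduce_id in map(parse_block_id, block_ids):
        last = batches[-1] if batches else None
        if last and last[:2] == (shuffle_id, map_id) and last[3] == reduce_id:
            # Extend the current run by one block.
            batches[-1] = (shuffle_id, map_id, last[2], reduce_id + 1)
        else:
            # Start a new run.
            batches.append((shuffle_id, map_id, reduce_id, reduce_id + 1))
    return batches


blocks = [f"shuffle_0_3_{r}" for r in range(5, 15)]
print(merge_contiguous(blocks))
```

In this sketch, the ten blocks for reducers 5 to 14 collapse into a single range, which corresponds to the "1 disk IO and 1 network IO" case described above.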

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Add new UT.

Integration test with the setting `spark.sql.adaptive.enabled=true`.

Closes #26040 from xuanyuanking/SPARK-9853.

Lead-authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Co-authored-by: yucai <yyu1@ebay.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

bool_or(x) <=> any/some(x) <=> max(x)

bool_and(x) <=> every(x) <=> min(x)

Args:

x: boolean
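
The equivalences above rely on the ordering `false < true`. A minimal Python sketch of that idea follows; the function names mirror the SQL names, and the NULL handling (ignore NULL inputs, return NULL for no non-NULL input) is an assumption based on how SQL aggregates usually behave, not something stated in this PR.

```python
from typing import Iterable, Optional


def bool_or(values: Iterable[Optional[bool]]) -> Optional[bool]:
    """True if any non-NULL value is true; same as max() because False < True."""
    non_null = [v for v in values if v is not None]
    return max(non_null) if non_null else None


def bool_and(values: Iterable[Optional[bool]]) -> Optional[bool]:
    """True if every non-NULL value is true; same as min() because False < True."""
    non_null = [v for v in values if v is not None]
    return min(non_null) if non_null else None


rows = [True, False, None, True]
print(bool_or(rows), bool_and(rows))
```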

### Why are the changes needed?

PostgreSQL, Presto, Vertica, etc. also support this feature.

### Does this PR introduce any user-facing change?

add new functions support

### How was this patch tested?

Added unit tests.

Closes #26126 from yaooqinn/SPARK-27880.

Authored-by: Kent Yao <yaooqinn@hotmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Proposes a new expression `SubtractDates`, which is used for `date1` - `date2`. It has the `INTERVAL` type and returns the interval between `date1` (inclusive) and `date2` (exclusive). For example:

```sql

> select date'tomorrow' - date'yesterday';

interval 2 days

```

Closes #26034

### Why are the changes needed?

- To conform to the SQL standard, which states that the result type of `date operand 1` - `date operand 2` must be the interval type. See [4.5.3 Operations involving datetimes and intervals](http://www.contrib.andrew.cmu.edu/~shadow/sql/sql1992.txt).

- Improve Spark SQL UX and allow mixing date and timestamp in subtractions. For example: `select timestamp'now' + (date'2019-10-01' - date'2019-09-15')`

### Does this PR introduce any user-facing change?

Before, the query below returned the number of days:

```sql

spark-sql> select date'2019-10-05' - date'2018-09-01';

399

```

After, it returns an interval:

```sql

spark-sql> select date'2019-10-05' - date'2018-09-01';

interval 1 years 1 months 4 days

```
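
One way to arrive at a year/month/day result like the one above is to count whole months first and then the leftover days. The Python sketch below does this for `start <= end` only. It is an illustration, not the `SubtractDates` implementation, and the helper names `add_months` and `subtract_dates` are hypothetical.

```python
import calendar
from datetime import date
from typing import Tuple


def add_months(d: date, months: int) -> date:
    """Add whole months, clamping the day to the length of the target month."""
    total = d.year * 12 + (d.month - 1) + months
    year, month0 = divmod(total, 12)
    last_day = calendar.monthrange(year, month0 + 1)[1]
    return date(year, month0 + 1, min(d.day, last_day))


def subtract_dates(end: date, start: date) -> Tuple[int, int, int]:
    """Return (years, months, days) for end - start, assuming start <= end."""
    if start > end:
        raise ValueError("this sketch only handles start <= end")
    months = (end.year - start.year) * 12 + (end.month - start.month)
    days = end.day - start.day
    if days < 0:
        # The last month is incomplete: drop it and count the leftover days.
        months -= 1
        days = (end - add_months(start, months)).days
    years, months = divmod(months, 12)
    return years, months, days


print(subtract_dates(date(2019, 10, 5), date(2018, 9, 1)))
```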

### How was this patch tested?

- by new tests in `DateExpressionsSuite` and `TypeCoercionSuite`.

- by existing tests in `date.sql`

Closes #26112 from MaxGekk/date-subtract.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

A followup of https://github.com/apache/spark/pull/25295

This PR proposes a few code cleanups:

1. rename the special `getMapSizesByExecutorId` to `getMapSizesByMapIndex`

2. rename the parameter `mapId` to `mapIndex` as that's really a mapper index.

3. `BlockStoreShuffleReader` should take `blocksByAddress` directly instead of a map id.

4. rename `getMapReader` to `getReaderForOneMapper` to be clearer.

### Why are the changes needed?

make code easier to understand

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #26128 from cloud-fan/followup.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

Updating univocity-parsers version to 2.8.3, which adds support for multiple character delimiters

Moving univocity-parsers version to spark-parent pom dependencyManagement section

Adding new utility method to build multi-char delimiter string, which delegates to existing one

Adding tests for multiple character delimited CSV

### What changes were proposed in this pull request?

Adds support for parsing CSV data using multiple-character delimiters. Existing logic for converting the input delimiter string to characters was kept and invoked in a loop. Project dependencies were updated to remove redundant declaration of `univocity-parsers` version, and also to change that version to the latest.

### Why are the changes needed?

It is quite common for people to have delimited data, where the delimiter is not a single character, but rather a sequence of characters. Currently, it is difficult to handle such data in Spark (typically needs pre-processing).

### Does this PR introduce any user-facing change?

Yes. Specifying the "delimiter" option for the DataFrame read, and providing more than one character, will no longer result in an exception. Instead, it will be converted as before and passed to the underlying library (Univocity), which has accepted multiple character delimiters since 2.8.0.

### How was this patch tested?

The `CSVSuite` tests were confirmed passing (including new methods), and `sbt` tests for `sql` were executed.

Closes #26027 from jeff303/SPARK-24540.

Authored-by: Jeff Evans <jeffrey.wayne.evans@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

When inserting a value into a column with a different data type, Spark performs type coercion. Currently, we support 3 policies for the store assignment rules: ANSI, legacy and strict, which can be set via the option "spark.sql.storeAssignmentPolicy":

1. ANSI: Spark performs the type coercion as per ANSI SQL. In practice, the behavior is mostly the same as PostgreSQL. It disallows certain unreasonable type conversions such as converting `string` to `int` and `double` to `boolean`. It will throw a runtime exception if the value is out of range (overflow).

2. Legacy: Spark allows the type coercion as long as it is a valid `Cast`, which is very loose. E.g., converting either `string` to `int` or `double` to `boolean` is allowed. It is the current behavior in Spark 2.x for compatibility with Hive. When inserting an out-of-range value into an integral field, the low-order bits of the value are inserted (the same as Java/Scala numeric type casting). For example, if 257 is inserted into a field of Byte type, the result is 1.

3. Strict: Spark doesn't allow any possible precision loss or data truncation in store assignment, e.g., converting either `double` to `int` or `decimal` to `double` is not allowed. The rules are originally for Dataset encoder. As far as I know, no mainstream DBMS is using this policy by default.

Currently, the V1 data source uses "Legacy" policy by default, while V2 uses "Strict". This proposal is to use "ANSI" policy by default for both V1 and V2 in Spark 3.0.
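
The overflow behavior that separates the Legacy and ANSI policies can be sketched in Python for a Byte field. This only models the out-of-range case described above. The function names are hypothetical, and `OverflowError` stands in for the runtime exception Spark would throw.

```python
BYTE_MIN, BYTE_MAX = -128, 127


def legacy_to_byte(value: int) -> int:
    """Legacy-style store: keep only the low-order 8 bits (like a Java cast)."""
    low = value & 0xFF
    # Reinterpret the 8-bit pattern as a signed byte.
    return low - 256 if low > BYTE_MAX else low


def ansi_to_byte(value: int) -> int:
    """ANSI-style store: reject out-of-range values instead of truncating."""
    if not BYTE_MIN <= value <= BYTE_MAX:
        raise OverflowError(f"{value} is out of range for a Byte field")
    return value


print(legacy_to_byte(257))
try:
    ansi_to_byte(257)
except OverflowError as e:
    print("ANSI policy rejects the value:", e)
```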

### Why are the changes needed?

Following the ANSI SQL standard is the most reasonable among the 3 policies.

### Does this PR introduce any user-facing change?

Yes.

The default store assignment policy is ANSI for both V1 and V2 data sources.

### How was this patch tested?

Unit test

Closes #26107 from gengliangwang/ansiPolicyAsDefault.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

## What changes were proposed in this pull request?

Implement a rule in the new adaptive execution framework introduced in [SPARK-23128](https://issues.apache.org/jira/browse/SPARK-23128). This rule converts the shuffle reader to a local shuffle reader when a sort-merge join (SMJ) is converted to a broadcast hash join (BHJ) in adaptive execution.

## How was this patch tested?

Existing tests

Closes #25295 from JkSelf/localShuffleOptimization.

Authored-by: jiake <ke.a.jia@intel.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

move the statement logical plans that were created for v2 commands to a new file `statements.scala`, under the same package of `v2Commands.scala`.

This PR also includes some minor cleanups:

1. remove `private[sql]` from `ParsedStatement` as it's in the private package.

2. remove unnecessary override of `output` and `children`.

3. add missing classdoc.

### Why are the changes needed?

Similar to https://github.com/apache/spark/pull/26111 , this is to better organize the logical plans of data source v2.

It's a bit weird to put the statements in the package `org.apache.spark.sql.catalyst.plans.logical.sql` as `sql` is not a good sub-package name in Spark SQL.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #26125 from cloud-fan/statement.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

The `unsafeProjection` conversion is performed twice when we read a Parquet data file in non-vectorized mode:

1. `ParquetGroupConverter` calls the unsafeProjection function to convert `SpecificInternalRow` to `UnsafeRow` every time a Parquet data file is read with `ParquetRecordReader`.

2. `ParquetFileFormat` calls the unsafeProjection function to convert this `UnsafeRow` to another `UnsafeRow` again when partitionSchema is not empty in the DataSourceV1 branch, and `PartitionReaderWithPartitionValues` always performs this conversion in the DataSourceV2 branch.

This PR removes the `unsafeProjection` conversion in `ParquetGroupConverter` and changes `ParquetRecordReader` to produce `SpecificInternalRow` instead of `UnsafeRow`.

### Why are the changes needed?

The first conversion in `ParquetGroupConverter` is redundant; it is enough for `ParquetRecordReader` to return an `InternalRow` (`SpecificInternalRow`).

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Unit Test

Closes #26106 from LuciferYang/spark-parquet-unsafe-projection.

Authored-by: yangjie01 <yangjie01@baidu.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Refine the document of v2 session catalog config, to clearly explain what it is, when it should be used and how to implement it.

### Why are the changes needed?

Make this config more understandable

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Pass the Jenkins with the newly updated test cases.

Closes #26071 from cloud-fan/config.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR aims to fix the behavior of `mode("default")` to set `SaveMode.ErrorIfExists`. Also, this PR updates the exception message by adding `default` explicitly.

### Why are the changes needed?

This was reported during the `GRAPH API` PR. This builder pattern should work as documented.

### Does this PR introduce any user-facing change?

Yes, if the app has multiple `mode()` invocations including `mode("default")`, and `mode("default")` is the last invocation. This is really a corner case.

- Previously, the last invocation was handled as `No-Op`.

- After this bug fix, it will work like the documentation.
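
The corner case can be illustrated with a small builder sketch in Python. The class `SketchWriter` is hypothetical and only models the string-to-mode mapping; it is not the `DataFrameWriter` code. The point is that a trailing `mode("default")` now resets the mode instead of being a no-op.

```python
class SketchWriter:
    """Hypothetical stand-in for a writer that uses the builder pattern."""

    _MODES = {
        "overwrite": "Overwrite",
        "append": "Append",
        "ignore": "Ignore",
        "error": "ErrorIfExists",
        "errorifexists": "ErrorIfExists",
        # After the fix, "default" explicitly selects ErrorIfExists.
        "default": "ErrorIfExists",
    }

    def __init__(self) -> None:
        self.save_mode = "ErrorIfExists"

    def mode(self, name: str) -> "SketchWriter":
        key = name.lower()
        if key not in self._MODES:
            raise ValueError(f"Unknown save mode: {name}")
        self.save_mode = self._MODES[key]
        return self


writer = SketchWriter().mode("overwrite").mode("default")
print(writer.save_mode)
```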

### How was this patch tested?

Pass the Jenkins with the newly added test case.

Closes #26094 from dongjoon-hyun/SPARK-29442.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

In this PR, I propose to move interval parsing to `CalendarInterval.fromCaseInsensitiveString()`, which throws an `IllegalArgumentException` for invalid strings, and to reuse it from `CalendarInterval.fromString()`. The latter handles only `IllegalArgumentException` and returns `NULL` for invalid interval strings. This makes it possible to support interval strings without the `interval` prefix in casting strings to intervals and in the interval type constructor, because they use `fromString()` for parsing string intervals.

For example:

```sql

spark-sql> select cast('1 year 10 days' as interval);

interval 1 years 1 weeks 3 days

spark-sql> SELECT INTERVAL '1 YEAR 10 DAYS';

interval 1 years 1 weeks 3 days

```
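
The split between a throwing parser and a NULL-returning wrapper can be sketched in Python as follows. This is a simplified illustration, not the `CalendarInterval` code: the function names are Python-style stand-ins, `ValueError` stands in for `IllegalArgumentException`, `None` models `NULL`, and the accepted grammar (integer and unit pairs only) is an assumption.

```python
import re
from typing import Dict, Optional

UNITS = ("year", "month", "week", "day", "hour", "minute",
         "second", "millisecond", "microsecond")


def from_case_insensitive_string(s: Optional[str]) -> Dict[str, int]:
    """Parse '<n> <unit> ...' with an optional 'interval' prefix; raise on bad input."""
    if s is None or not s.strip():
        raise ValueError("interval string cannot be null or blank")
    text = s.strip().lower()
    if text.startswith("interval "):
        text = text[len("interval"):].strip()  # the prefix is optional
    tokens = text.split()
    if not tokens or len(tokens) % 2 != 0:
        raise ValueError(f"invalid interval: {s}")
    result: Dict[str, int] = {}
    for number, unit in zip(tokens[0::2], tokens[1::2]):
        unit = unit[:-1] if unit.endswith("s") else unit  # accept plural units
        if unit not in UNITS or not re.fullmatch(r"[+-]?\d+", number):
            raise ValueError(f"invalid interval: {s}")
        result[unit] = result.get(unit, 0) + int(number)
    return result


def from_string(s: Optional[str]) -> Optional[Dict[str, int]]:
    """Wrapper that turns parse errors into None instead of raising."""
    try:
        return from_case_insensitive_string(s)
    except ValueError:
        return None


print(from_string("1 year 10 days"))
print(from_string("INTERVAL 1 YEAR 10 DAYS"))
print(from_string("not an interval"))
```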

### Why are the changes needed?

To maintain feature parity with PostgreSQL which supports interval strings without prefix:

```sql

# select interval '2 months 1 microsecond';

interval

------------------------

2 mons 00:00:00.000001

```

and to improve Spark SQL UX.

### Does this PR introduce any user-facing change?

Yes, previously parsing of interval strings without `interval` gave `NULL`:

```sql

spark-sql> select interval '2 months 1 microsecond';

NULL

```

After:

```sql

spark-sql> select interval '2 months 1 microsecond';

interval 2 months 1 microseconds

```

### How was this patch tested?

- Added new tests to `CalendarIntervalSuite.java`

- A test for casting strings to intervals in `CastSuite`

- Test for interval type constructor from strings in `ExpressionParserSuite`

Closes #26079 from MaxGekk/interval-str-without-prefix.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Currently, `SHOW NAMESPACES` and `SHOW DATABASES` are separate code paths. This PR merges the two implementations.

### Why are the changes needed?

To remove code/behavior duplication

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Added new unit tests.

Closes #26006 from imback82/combine_show.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

This PR adds 2 changes regarding exception handling in `SQLQueryTestSuite` and `ThriftServerQueryTestSuite`

- fixes an expected output sorting issue in `ThriftServerQueryTestSuite`, because there is no need to sort the output if there is an exception

- introduces common exception handling in those 2 suites with a new `handleExceptions` method

### Why are the changes needed?

Currently `ThriftServerQueryTestSuite` passes on master, but it fails on one of my PRs (https://github.com/apache/spark/pull/23531) with this error (https://amplab.cs.berkeley.edu/jenkins/job/SparkPullRequestBuilder/111651/testReport/org.apache.spark.sql.hive.thriftserver/ThriftServerQueryTestSuite/sql_3/):

```

org.scalatest.exceptions.TestFailedException: Expected "

[Recursion level limit 100 reached but query has not exhausted, try increasing spark.sql.cte.recursion.level.limit

org.apache.spark.SparkException]

", but got "

[org.apache.spark.SparkException

Recursion level limit 100 reached but query has not exhausted, try increasing spark.sql.cte.recursion.level.limit]

" Result did not match for query #4 WITH RECURSIVE r(level) AS ( VALUES (0) UNION ALL SELECT level + 1 FROM r ) SELECT * FROM r

```

The unexpected reversed order of expected output (error message comes first, then the exception class) is due to this line: https://github.com/apache/spark/pull/26028/files#diff-b3ea3021602a88056e52bf83d8782de8L146. It should not sort the expected output if there was an error during execution.
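
The intended behavior can be sketched in Python: run the query through a common exception handler, and sort the output lines only when no exception occurred. The function names below are hypothetical and do not mirror the Scala `handleExceptions` signature.

```python
from typing import Callable, List, Tuple


def handle_exceptions(run: Callable[[], List[str]]) -> Tuple[List[str], bool]:
    """Return (output lines, failed). On failure the lines are [class, message]."""
    try:
        return run(), False
    except Exception as e:
        return [type(e).__name__, str(e)], True


def normalized_output(run: Callable[[], List[str]]) -> List[str]:
    """Sort successful results for stable comparison; keep error output as-is."""
    lines, failed = handle_exceptions(run)
    return lines if failed else sorted(lines)


def failing_query() -> List[str]:
    raise RuntimeError("Recursion level limit 100 reached")


print(normalized_output(lambda: ["b", "a"]))
print(normalized_output(failing_query))
```

In this sketch, sorting the error output would put the message before the exception class, which is the kind of reordering described above.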

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing UTs.

Closes #26028 from peter-toth/SPARK-29359-better-exception-handling.

Authored-by: Peter Toth <peter.toth@gmail.com>

Signed-off-by: Yuming Wang <wgyumg@gmail.com>

### What changes were proposed in this pull request?

Revert commit 18b7ad2fc5.

### Why are the changes needed?

See https://github.com/apache/spark/pull/16304#discussion_r92753590

### Does this PR introduce any user-facing change?

Yes

### How was this patch tested?

There is no test for that.

Closes #26101 from MaxGekk/revert-mean-seconds-per-month.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Use `.sameElements` to compare (non-nested) arrays, as `Arrays.deep` is removed in 2.13 and wasn't the best way to do this in the first place.

### Why are the changes needed?

To compile with 2.13.

### Does this PR introduce any user-facing change?

None.

### How was this patch tested?

Existing tests.

Closes #26073 from srowen/SPARK-29416.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Replace `Unit` with equivalent `()` where code refers to the `Unit` companion object.

### Why are the changes needed?

It doesn't compile otherwise in Scala 2.13.

- https://github.com/scala/scala/blob/v2.13.0/src/library/scala/Unit.scala#L30

### Does this PR introduce any user-facing change?

Should be no behavior change at all.

### How was this patch tested?

Existing tests.

Closes #26070 from srowen/SPARK-29411.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR adds an accumulator that computes a global aggregate over a number of rows. A user can define an arbitrary number of aggregate functions which can be computed at the same time.

The accumulator uses the standard technique for implementing (interpreted) aggregation in Spark. It uses projections and manual updates for each of the aggregation steps (initialize buffer, update buffer with new input row, merge two buffers and compute the final result on the buffer). Note that two of the steps (update and merge) use the aggregation buffer both as input and output.
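
The four aggregation steps named above (initialize, update, merge, evaluate) can be sketched in Python with an average as the aggregate. This is a generic illustration of the pattern, not the `AggregatingAccumulator` code; the buffer layout `[sum, count]` and all function names are assumptions.

```python
from typing import Iterable, List, Optional

Buffer = List[float]  # [running sum, row count]


def initialize() -> Buffer:
    return [0.0, 0]


def update(buffer: Buffer, value: float) -> Buffer:
    """Fold one input row into the buffer (buffer is both input and output)."""
    buffer[0] += value
    buffer[1] += 1
    return buffer


def merge(buffer: Buffer, other: Buffer) -> Buffer:
    """Fold another partial buffer into this one (buffer is both input and output)."""
    buffer[0] += other[0]
    buffer[1] += other[1]
    return buffer


def evaluate(buffer: Buffer) -> Optional[float]:
    """Compute the final result from the buffer; None for empty input."""
    return buffer[0] / buffer[1] if buffer[1] else None


def aggregate_partitions(partitions: Iterable[Iterable[float]]) -> Optional[float]:
    merged = initialize()
    for rows in partitions:
        local = initialize()  # one buffer per partition, as a task would keep
        for value in rows:
            update(local, value)
        merge(merged, local)
    return evaluate(merged)


print(aggregate_partitions([[1.0, 2.0], [3.0], []]))
```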

Accumulators do not have an explicit point at which they get serialized. A somewhat surprising side effect is that the buffers of a `TypedImperativeAggregate` go over the wire as-is instead of being serialized. The merging logic for `TypedImperativeAggregate` assumes that the input buffer contains serialized buffers; this is violated by the accumulator's implicit serialization. To get around this, I have added a `mergeBuffersObjects` method to `TypedImperativeAggregate` that merges two unserialized buffers.

### Why are the changes needed?

This is the mechanism we are going to use to implement observable metrics.

### Does this PR introduce any user-facing change?

No, not yet.

### How was this patch tested?

Added `AggregatingAccumulator` test suite.

Closes #26012 from hvanhovell/SPARK-29346.

Authored-by: herman <herman@databricks.com>

Signed-off-by: herman <herman@databricks.com>

### What changes were proposed in this pull request?

DataSourceV2 Exec classes (ShowTablesExec, ShowNamespacesExec, etc.) all extend LeafExecNode. This results in running a job when executeCollect() is called. This breaks the previous behavior [SPARK-19650](https://issues.apache.org/jira/browse/SPARK-19650).

A new command physical operator will be introduced, from which all V2 Exec classes derive, to avoid running a job.

### Why are the changes needed?

It is a bug since the current behavior runs a spark job, which breaks the existing behavior: [SPARK-19650](https://issues.apache.org/jira/browse/SPARK-19650).

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing unit tests.

Closes #26048 from imback82/dsv2_command.

Authored-by: Terry Kim <yuminkim@gmail.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Invocations like `sc.parallelize(Array((1,2)))` cause a compile error in 2.13, like:

```

[ERROR] [Error] /Users/seanowen/Documents/spark_2.13/core/src/test/scala/org/apache/spark/ShuffleSuite.scala:47: overloaded method value apply with alternatives:

(x: Unit,xs: Unit*)Array[Unit] <and>

(x: Double,xs: Double*)Array[Double] <and>

(x: Float,xs: Float*)Array[Float] <and>

(x: Long,xs: Long*)Array[Long] <and>

(x: Int,xs: Int*)Array[Int] <and>

(x: Char,xs: Char*)Array[Char] <and>

(x: Short,xs: Short*)Array[Short] <and>

(x: Byte,xs: Byte*)Array[Byte] <and>

(x: Boolean,xs: Boolean*)Array[Boolean]

cannot be applied to ((Int, Int), (Int, Int), (Int, Int), (Int, Int))

```

Using a `Seq` instead appears to resolve it, and is effectively equivalent.

### Why are the changes needed?

To better cross-build for 2.13.

### Does this PR introduce any user-facing change?

None.

### How was this patch tested?

Existing tests.

Closes #26062 from srowen/SPARK-29401.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Syntax like `'foo` is deprecated in Scala 2.13. Replace usages with `Symbol("foo")`

### Why are the changes needed?

Avoids ~50 deprecation warnings when attempting to build with 2.13.

### Does this PR introduce any user-facing change?

None, should be no functional change at all.

### How was this patch tested?

Existing tests.

Closes #26061 from srowen/SPARK-29392.

Authored-by: Sean Owen <sean.owen@databricks.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Reimplement `org.apache.spark.sql.catalyst.util.QuantileSummaries#merge` and add a test-case showing the previous bug.

### Why are the changes needed?

The original Greenwald-Khanna paper, from which the algorithm behind `approxQuantile` was taken, does not cover how to merge the results of multiple parallel QuantileSummaries. The current implementation violates some invariants, and therefore the effective error can be larger than specified.

### Does this PR introduce any user-facing change?

Yes, for some cases, the results from `approxQuantile` (`percentile_approx` in SQL) will now be within the expected error margin. For example:

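```scala
// Intended for spark-shell, where `spark` and its implicits are already in scope.
val values = (1 to 100).toArray
// Request every quantile 1/100, 2/100, ..., 100/100.
val all_quantiles = values.indices.map(i => (i + 1).toDouble / values.length).toArray
for (n <- 0 until 5) {
  val df = spark.sparkContext.makeRDD(values).toDF("value").repartition(5)
  // A relative error of 0.1 on 100 values allows a rank error of at most 10.
  val all_answers = df.stat.approxQuantile("value", all_quantiles, 0.1)
  // The values are 1..100 in order, so the index of an answer is its rank.
  val all_answered_ranks = all_answers.map(ans => values.indexOf(ans)).toArray
  val error = all_answered_ranks.zipWithIndex.map { case (answer, expected) => Math.abs(expected - answer) }
  val max_error = error.max
  println(max_error)
}
```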

In the current build it returns:

```

16

12

10

11

17

```

I couldn't run the code with this patch applied to double-check the implementation. Can someone please confirm that it now outputs at most `10`?

### How was this patch tested?

A new unit test was added to uncover the previous bug.

Closes #26029 from sitegui/SPARK-29336.

Authored-by: Guilherme <sitegui@sitegui.com.br>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Add back the resolved logical plan for UPDATE TABLE. It was in https://github.com/apache/spark/pull/25626 before but was removed later.

### Why are the changes needed?

In https://github.com/apache/spark/pull/25626 , we decided to not add the update API in DS v2, but we still want to implement UPDATE for builtin source like JDBC. We should at least add the resolved logical plan.

### Does this PR introduce any user-facing change?

no, UPDATE is still not supported yet.

### How was this patch tested?

new tests.

Closes #26025 from cloud-fan/update.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

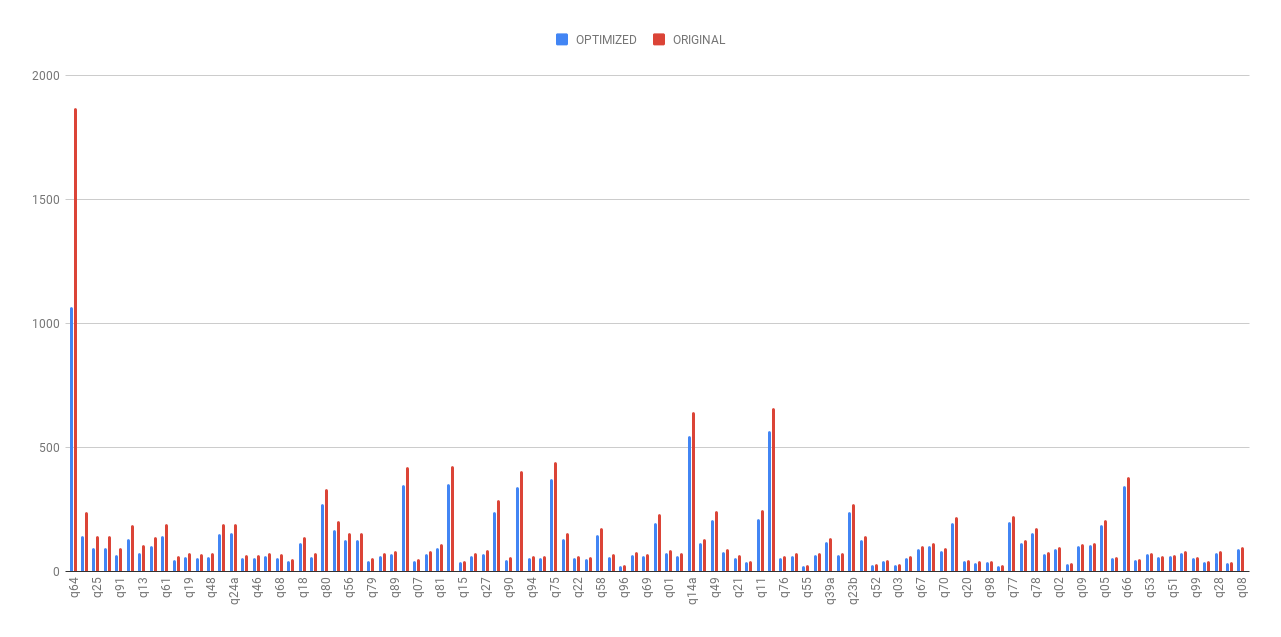

### What changes were proposed in this pull request?

This PR aims to do the following.

- Refactor `TPCDSQueryBenchmark` to use main method to improve the usability.

- Reduce the number of iterations from 5 to 2 because it takes too long. (2 is okay because we have the `Stdev` field now. If there is an irregular run, we can easily notice it with that.)

- Generate one result file for TPCDS scale factor 1. (Note that this test suite can be used for the other scale factors, too.)

- AWS EC2 `r3.xlarge` with `ami-06f2f779464715dc5 (ubuntu-bionic-18.04-amd64-server-20190722.1)` is used.

This PR adds a JDK8 result based on the TPCDS ScaleFactor 1G data generated by the following.

```

# `spark-tpcds-datagen` needs this. (JDK8)

$ git clone https://github.com/apache/spark.git -b branch-2.4 --depth 1 spark-2.4

$ export SPARK_HOME=$PWD

$ ./build/mvn clean package -DskipTests

# Generate data. (JDK8)

$ git clone git@github.com:maropu/spark-tpcds-datagen.git

$ cd spark-tpcds-datagen/

$ build/mvn clean package

$ mkdir -p /data/tpcds

$ ./bin/dsdgen --output-location /data/tpcds/s1 # This needs Spark 2.4

```

### Why are the changes needed?

Although the generated TPCDS data is random, we can keep the record.

### Does this PR introduce any user-facing change?

No. (This is dev-only test benchmark).

### How was this patch tested?

Manually run the benchmark. Please note that you need to have TPCDS data.

```

SPARK_GENERATE_BENCHMARK_FILES=1 build/sbt "sql/test:runMain org.apache.spark.sql.execution.benchmark.TPCDSQueryBenchmark --data-location /data/tpcds/s1"

```

Closes #26049 from dongjoon-hyun/SPARK-25668.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

In our production environment we run a pure Spark cluster in which computation is separated from the storage layer; I think this setup is fairly common. In such a deployment, data locality is never achievable.

The Spark scheduler has configurations that reduce the time spent waiting for data locality (e.g. "spark.locality.wait"). The problem is that, in the file listing phase, the location information of all the files, including all the blocks inside each file, is fetched from the distributed file system. In a production environment, a table can be so large that fetching all this location information takes tens of seconds.

To improve this scenario, Spark needs to provide an option to ignore data locality entirely, so that the file listing phase fetches only the file locations, without any block location information.

### Why are the changes needed?

We ran a benchmark in our production environment. After ignoring the block locations, we saw a large improvement.

Table Size | Total File Number | Total Block Number | List File Duration (With Block Location) | List File Duration (Without Block Location)
-- | -- | -- | -- | --
22.6 T | 30000 | 120000 | 16.841s | 1.730s
28.8 T | 42001 | 148964 | 10.099s | 2.858s
3.4 T | 20000 | 20000 | 5.833s | 4.881s

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Via ut.

Closes #25869 from wangshisan/SPARK-29189.

Authored-by: gwang3 <gwang3@ebay.com>

Signed-off-by: Imran Rashid <irashid@cloudera.com>

### What changes were proposed in this pull request?

I introduced new constants `SECONDS_PER_MONTH` and `MILLIS_PER_MONTH`, and reused them in calculations of seconds/milliseconds per month. `SECONDS_PER_MONTH` is 2629746 because the average year of the Gregorian calendar is 365.2425 days long or 60 * 60 * 24 * 365.2425 = 31556952.0 = 12 * 2629746 seconds per year.
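
The arithmetic above can be checked with a short Python sketch. It uses exact fractions to avoid floating-point rounding. The constant names follow the PR, while `MILLIS_PER_MONTH` is assumed here to be `SECONDS_PER_MONTH` times 1000.

```python
from fractions import Fraction

# Average Gregorian year length in days, kept exact as a fraction.
DAYS_PER_YEAR = Fraction("365.2425")
SECONDS_PER_DAY = 60 * 60 * 24

seconds_per_year = SECONDS_PER_DAY * DAYS_PER_YEAR
SECONDS_PER_MONTH = seconds_per_year / 12
# Assumption for this sketch: milliseconds are derived from the seconds constant.
MILLIS_PER_MONTH = SECONDS_PER_MONTH * 1000

print(int(seconds_per_year), int(SECONDS_PER_MONTH), int(MILLIS_PER_MONTH))
```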

### Why are the changes needed?

Spark uses the proleptic Gregorian calendar (see https://issues.apache.org/jira/browse/SPARK-26651) in which the average year is 365.2425 days (see https://en.wikipedia.org/wiki/Gregorian_calendar), but the existing implementation assumes 31 days per month, or 12 * 31 = 372 days per year. That's far from the truth.

### Does this PR introduce any user-facing change?

Yes, the changes affect at least 3 methods in `GroupStateImpl`, `EventTimeWatermark` and `MonthsBetween`. For example, the `months_between()` function will return a different result in some cases.

Before:

```sql

spark-sql> select months_between('2019-09-15', '1970-01-01');

596.4516129

```

After:

```sql

spark-sql> select months_between('2019-09-15', '1970-01-01');

596.45996838

```
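
The two outputs above are consistent with 596 whole months (1970-01-01 to 2019-09-01) plus a fraction for the remaining 14 days: 14 / 31 before the change, and 14 days in seconds divided by `SECONDS_PER_MONTH` after it. The Python sketch below only reproduces that arithmetic; it is not the `MonthsBetween` implementation, and the function names are hypothetical.

```python
SECONDS_PER_DAY = 60 * 60 * 24
SECONDS_PER_MONTH = 2629746

WHOLE_MONTHS = 596  # 1970-01-01 to 2019-09-01
EXTRA_DAYS = 14     # 2019-09-01 to 2019-09-15


def months_before_change(whole_months: int, extra_days: int) -> float:
    """Fraction of a month computed with a fixed 31-day month."""
    return whole_months + extra_days / 31


def months_after_change(whole_months: int, extra_days: int) -> float:
    """Fraction of a month computed with the average Gregorian month."""
    return whole_months + extra_days * SECONDS_PER_DAY / SECONDS_PER_MONTH


print(round(months_before_change(WHOLE_MONTHS, EXTRA_DAYS), 8))
print(round(months_after_change(WHOLE_MONTHS, EXTRA_DAYS), 8))
```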

### How was this patch tested?

By existing test suite `DateTimeUtilsSuite`, `DateFunctionsSuite` and `DateExpressionsSuite`.

Closes #25998 from MaxGekk/days-in-year.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Sean Owen <sean.owen@databricks.com>

### What changes were proposed in this pull request?

Added new rules to `TypeCoercion.DateTimeOperations` for the `Subtract` expression, which is replaced by the existing `TimestampDiff` expression if one of its parameters has the `DATE` type and the other has the `TIMESTAMP` type. The date argument is cast to timestamp.

### Why are the changes needed?

- To maintain feature parity with PostgreSQL which supports subtraction of a date from a timestamp and a timestamp from a date:

```sql

maxim=# select timestamp'now' - date'epoch';

?column?

----------------------------

18175 days 21:07:33.412875

(1 row)

maxim=# select date'2020-01-01' - timestamp'now';

?column?

-------------------------

86 days 02:52:00.945296

(1 row)

```

- To conform to the SQL standard which defines datetime subtraction as an interval.

### Does this PR introduce any user-facing change?

Yes. Currently, the query below fails with the error:

```sql

spark-sql> select timestamp'now' - date'2019-10-01';

Error in query: cannot resolve '(TIMESTAMP('2019-10-06 21:05:07.234') - DATE '2019-10-01')' due to data type mismatch: differing types in '(TIMESTAMP('2019-10-06 21:05:07.234') - DATE '2019-10-01')' (timestamp and date).; line 1 pos 7;

'Project [unresolvedalias((1570385107234000 - 18170), None)]

+- OneRowRelation

```

after the changes:

```sql

spark-sql> select timestamp'now' - date'2019-10-01';

interval 5 days 21 hours 4 minutes 55 seconds 878 milliseconds

```

### How was this patch tested?

- By new cases added to the `rule for date/timestamp operations` test in `TypeCoercionSuite`

- By 2 new tests in `datetime.sql`

Closes #26036 from MaxGekk/date-timestamp-subtract.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

Change to use AtomicLong instead of a var in the test.

### Why are the changes needed?

Fix flaky test.

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Existing UT.

Closes #26020 from xuanyuanking/SPARK-25159.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Added a new expression `TimestampDiff` for timestamp subtraction. It accepts 2 timestamp expressions and returns another one of the `CalendarIntervalType`. While creating an instance of `CalendarInterval`, it initializes only the microsecond field with the difference of the given timestamps in microseconds, and sets the `months` field to zero. I also added a rule for converting `Subtract` to `TimestampDiff`, and enabled already ported test queries in `postgreSQL/timestamp.sql`.
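
The behavior described above (months fixed at zero, the whole difference carried in microseconds) can be sketched in Python. The class `CalendarIntervalSketch` and the function `timestamp_diff` are hypothetical stand-ins for illustration, not the Spark classes.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class CalendarIntervalSketch:
    """Hypothetical two-field interval: calendar months plus microseconds."""
    months: int
    microseconds: int


def timestamp_diff(end: datetime, start: datetime) -> CalendarIntervalSketch:
    """Return end - start with months = 0 and the difference in microseconds."""
    micros = (end - start) // timedelta(microseconds=1)
    return CalendarIntervalSketch(months=0, microseconds=micros)


print(timestamp_diff(datetime(2019, 10, 4), datetime(2019, 10, 3)))
```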

### Why are the changes needed?

To maintain feature parity with PostgreSQL, which allows computing the difference between timestamps:

```sql

# select timestamp'today' - timestamp'yesterday';

?column?

----------

1 day

(1 row)

```

### Does this PR introduce any user-facing change?

Yes, previously users got the following error from timestamp subtraction:

```sql

spark-sql> select timestamp'today' - timestamp'yesterday';

Error in query: cannot resolve '(TIMESTAMP('2019-10-04 00:00:00') - TIMESTAMP('2019-10-03 00:00:00'))' due to data type mismatch: '(TIMESTAMP('2019-10-04 00:00:00') - TIMESTAMP('2019-10-03 00:00:00'))' requires (numeric or interval) type, not timestamp; line 1 pos 7;

'Project [unresolvedalias((1570136400000000 - 1570050000000000), None)]

+- OneRowRelation

```

after the changes they should get an interval:

```sql

spark-sql> select timestamp'today' - timestamp'yesterday';

interval 1 days

```

### How was this patch tested?

- Added tests for `TimestampDiff` to `DateExpressionsSuite`

- By new test in `TypeCoercionSuite`.

- Enabled tests in `postgreSQL/timestamp.sql`.

Closes #26022 from MaxGekk/timestamp-diff.

Authored-by: Maxim Gekk <max.gekk@gmail.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Currently we handle the different `ParsedStatement`s in many places and duplicate the catalog/table lookup logic. In general, the lookup logic is:

1. Try to look up the catalog by name. If there is no such catalog and the default catalog is not set, convert the `ParsedStatement` to a v1 command like `ShowDatabasesCommand`. Otherwise, convert the `ParsedStatement` to a v2 command like `ShowNamespaces`.

2. Try to look up the table by name. If there is no such table, fail. If the table is a `V1Table`, convert the `ParsedStatement` to a v1 command like `CreateTable`. Otherwise, convert the `ParsedStatement` to a v2 command like `CreateV2Table`.

However, because the code is duplicated, we don't apply this lookup logic consistently. For example, we forget to consider the v2 session catalog in several places.

This PR centralizes the catalog/table lookup logic in 3 rules:

1. `ResolveCatalogs` (in catalyst). This rule resolves the v2 catalog from the multipart identifier in SQL statements, and converts the statement to a v2 command if the resolved catalog is not the session catalog. If the command needs to resolve the table (e.g. ALTER TABLE), it puts an `UnresolvedV2Table` in the command.

2. `ResolveTables` (in catalyst). This rule resolves `UnresolvedV2Table` to `DataSourceV2Relation`.

3. `ResolveSessionCatalog` (in sql/core). This rule is only effective if the resolved catalog is the session catalog. For commands that don't need to resolve the table, this rule converts the statement to a v1 command directly. Otherwise, it converts the statement to a v1 command if the resolved table is a v1 table, and to a v2 command if the resolved table is a v2 table. Hopefully we can remove this rule eventually, once v1 fallback is no longer needed.
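
The decision made by the three rules can be summarized in a small, self-contained sketch. All types below (`CatalogSketch`, `TableSketch`, `CommandSketch`, `LookupSketch`) are hypothetical simplifications for illustration; they are not the analyzer classes from the patch, and the real rules operate on logical plans rather than on these enums.

```scala
// Hypothetical, simplified model of the centralized lookup decision.
sealed trait CatalogSketch
case object SessionCatalogSketch extends CatalogSketch
final case class V2CatalogSketch(name: String) extends CatalogSketch

sealed trait TableSketch
case object V1TableSketch extends TableSketch
case object V2TableSketch extends TableSketch

sealed trait CommandSketch
case object V1CommandSketch extends CommandSketch
case object V2CommandSketch extends CommandSketch

object LookupSketch {
  // `table` is None for statements that do not need to resolve a table.
  def resolve(catalog: CatalogSketch, table: Option[TableSketch]): CommandSketch =
    catalog match {
      // ResolveCatalogs: any non-session catalog leads to a v2 command.
      case V2CatalogSketch(_) => V2CommandSketch
      // ResolveSessionCatalog: only applies to the session catalog.
      case SessionCatalogSketch =>
        table match {
          case None                => V1CommandSketch // no table needed: v1 directly
          case Some(V1TableSketch) => V1CommandSketch // v1 fallback
          case Some(V2TableSketch) => V2CommandSketch
        }
    }
}
```

Keeping the decision in one place is what makes it hard to forget a case such as a v2 table living in the session catalog.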

### Why are the changes needed?

Reduce duplicated code and make the catalog/table lookup logic consistent.

### Does this PR introduce any user-facing change?

no

### How was this patch tested?

existing tests

Closes #25747 from cloud-fan/lookup.

Authored-by: Wenchen Fan <wenchen@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Add the access modifier `protected` to `sparkConf` in `SQLQueryTestSuite`, because it is protected in the parent trait `SharedSparkSession`.

### Why are the changes needed?

Code consistency.

### Does this PR introduce any user-facing change?

No

### How was this patch tested?

Existing UT.

Closes #26019 from xuanyuanking/SPARK-29203.

Authored-by: Yuanjian Li <xyliyuanjian@gmail.com>

Signed-off-by: HyukjinKwon <gurwls223@apache.org>

### What changes were proposed in this pull request?

1. With the ANSI store assignment policy, an exception is thrown on casting failure.

2. Introduce a new expression `AnsiCast` for the ANSI store assignment policy, so that the store assignment policy configuration won't affect the general `Cast`.

### Why are the changes needed?

As per the ANSI SQL standard, the ANSI store assignment policy should throw an exception on insertion failure, such as inserting an out-of-range value into a numeric field.
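
To make the difference concrete, here is a minimal sketch in plain Scala of a wrapping cast versus a checked cast from `Long` to `Int`. The object and method names are hypothetical, and the exception type and message are assumptions of this sketch; it does not reproduce the `AnsiCast` implementation.

```scala
object StoreAssignmentSketch {
  // Unchecked cast: silently wraps around on overflow, as Long.toInt does on the JVM.
  def uncheckedCastToInt(v: Long): Int = v.toInt

  // Checked cast in the spirit of the ANSI policy: fail instead of storing
  // a value that no longer matches the input.
  def checkedCastToInt(v: Long): Int =
    if (v < Int.MinValue || v > Int.MaxValue)
      throw new ArithmeticException(s"Casting $v to int causes overflow")
    else v.toInt
}
```

For the input `2147483648L` (one past `Int.MaxValue`), the unchecked variant returns `Int.MinValue`, while the checked variant throws.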

### Does this PR introduce any user-facing change?

With the ANSI store assignment policy, an exception is thrown on casting failure.

### How was this patch tested?

Unit test

Closes #25997 from gengliangwang/newCast.

Authored-by: Gengliang Wang <gengliang.wang@databricks.com>

Signed-off-by: Wenchen Fan <wenchen@databricks.com>

### What changes were proposed in this pull request?

Dynamic partition pruning filters are added as an in-subquery containing a `BroadcastExchangeExec` in case of a broadcast hash join. This PR makes the `ReuseExchange` rule visit in-subquery nodes, to ensure the new `BroadcastExchangeExec` added by dynamic partition pruning can be reused.

### Why are the changes needed?

The initial dynamic partition pruning PR did not enable this reuse, which means a broadcast exchange would be executed twice: once in the main query and once in the DPP filter.
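
The effect of visiting in-subquery nodes can be sketched with a toy plan tree. Every type below is a hypothetical simplification (case-class equality stands in for comparing canonicalized plans), and the sketch simply marks whichever duplicate it visits second as reused; it is not the `ReuseExchange` rule itself.

```scala
import scala.collection.mutable

// Hypothetical toy plan nodes.
sealed trait Plan
final case class Scan(table: String) extends Plan
final case class Broadcast(child: Plan) extends Plan
final case class Reused(original: Broadcast) extends Plan
final case class InSubquery(plan: Plan)
final case class FilteredScan(table: String, dppFilter: Option[InSubquery]) extends Plan
final case class Join(left: Plan, right: Plan) extends Plan

object ReuseSketch {
  // Replaces repeated Broadcast nodes with Reused; descends into DPP
  // subqueries only when visitSubqueries is true.
  def reuse(plan: Plan, visitSubqueries: Boolean): Plan = {
    val seen = mutable.Set.empty[Broadcast]
    def go(p: Plan): Plan = p match {
      case b: Broadcast =>
        if (seen.contains(b)) Reused(b) else { seen += b; b }
      case FilteredScan(t, Some(InSubquery(sub))) if visitSubqueries =>
        FilteredScan(t, Some(InSubquery(go(sub))))
      case Join(l, r) => Join(go(l), go(r))
      case other => other
    }
    go(plan)
  }

  // Counts the broadcasts that would actually be executed.
  def broadcastCount(p: Plan): Int = p match {
    case Broadcast(c) => 1 + broadcastCount(c)
    case FilteredScan(_, Some(InSubquery(sub))) => broadcastCount(sub)
    case Join(l, r) => broadcastCount(l) + broadcastCount(r)
    case _ => 0
  }
}
```

For a join whose probe side carries a DPP filter over the same broadcast as the build side, the sketch leaves two broadcasts when subqueries are skipped and one when they are visited.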

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

Added a broadcast exchange reuse check in `DynamicPartitionPruningSuite`.

Closes #26015 from maryannxue/exchange-reuse.

Authored-by: maryannxue <maryannxue@apache.org>

Signed-off-by: Xiao Li <gatorsmile@gmail.com>

### What changes were proposed in this pull request?

Add an overload of the higher-order function `filter` to `org.apache.spark.sql.functions` whose lambda takes the array index as its second argument.
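
The semantics of an index-aware filter can be illustrated on ordinary Scala collections. The helper below is a hypothetical analogue, not the Spark function (which works on `Column`s), and it assumes a zero-based index.

```scala
object FilterWithIndexSketch {
  // Keeps the elements for which the predicate holds. The predicate receives
  // the element and its zero-based position in the sequence.
  def filterWithIndex[A](xs: Seq[A])(p: (A, Int) => Boolean): Seq[A] =
    xs.zipWithIndex.collect { case (x, i) if p(x, i) => x }
}
```

For example, `FilterWithIndexSketch.filterWithIndex(Seq(10, 0, 5, 1))((x, i) => x > i)` keeps only the elements that are greater than their own position, which gives `Seq(10, 5)`.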

### Why are the changes needed?

See SPARK-28962 and SPARK-27297. Specifically, ueshin pointed out the discrepancy here: https://github.com/apache/spark/pull/24232#issuecomment-533288653

### Does this PR introduce any user-facing change?

### How was this patch tested?

Updated these test suites:

`test.org.apache.spark.sql.JavaHigherOrderFunctionsSuite`

and

`org.apache.spark.sql.DataFrameFunctionsSuite`

Closes #26007 from nvander1/add_index_overload_for_filter.

Authored-by: Nik Vanderhoof <nikolasrvanderhoof@gmail.com>

Signed-off-by: Takuya UESHIN <ueshin@databricks.com>

### What changes were proposed in this pull request?

This PR renames `object JSONBenchmark` to `object JsonBenchmark` and the benchmark result file `JSONBenchmark-results.txt` to `JsonBenchmark-results.txt`.

### Why are the changes needed?

Since the file name doesn't match `object JSONBenchmark`, running the benchmark is confusing. In addition, the mismatch makes automation difficult.

```
$ find . -name JsonBenchmark.scala
./sql/core/src/test/scala/org/apache/spark/sql/execution/datasources/json/JsonBenchmark.scala
```

```
$ build/sbt "sql/test:runMain org.apache.spark.sql.execution.datasources.json.JsonBenchmark"
[info] Running org.apache.spark.sql.execution.datasources.json.JsonBenchmark
[error] Error: Could not find or load main class org.apache.spark.sql.execution.datasources.json.JsonBenchmark
```

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

This is just renaming.

Closes #26008 from dongjoon-hyun/SPARK-RENAME-JSON.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

This PR regenerates the `sql/core` benchmarks on JDK8/11 to compare the results. In general, we compare the ratio instead of the time. However, in this PR, the average time is compared, so this should be considered a rough comparison.

**A. EXPECTED CASES (JDK11 is faster in general)**

- [x] BloomFilterBenchmark (JDK11 is faster except one case)

- [x] BuiltInDataSourceWriteBenchmark (JDK11 is faster at CSV/ORC)

- [x] CSVBenchmark (JDK11 is faster except five cases)

- [x] ColumnarBatchBenchmark (JDK11 is faster at `boolean`/`string` and some cases in `int`/`array`)

- [x] DatasetBenchmark (JDK11 is faster with `string`, but is slower for `long` type)

- [x] ExternalAppendOnlyUnsafeRowArrayBenchmark (JDK11 is faster except two cases)

- [x] ExtractBenchmark (JDK11 is faster except HOUR/MINUTE/SECOND/MILLISECONDS/MICROSECONDS)

- [x] HashedRelationMetricsBenchmark (JDK11 is faster)

- [x] JSONBenchmark (JDK11 is much faster except eight cases)

- [x] JoinBenchmark (JDK11 is faster except five cases)

- [x] OrcNestedSchemaPruningBenchmark (JDK11 is faster in nine cases)

- [x] PrimitiveArrayBenchmark (JDK11 is faster)

- [x] SortBenchmark (JDK11 is faster except `Arrays.sort` case)

- [x] UDFBenchmark (N/A, values are too small)

- [x] UnsafeArrayDataBenchmark (JDK11 is faster except one case)

- [x] WideTableBenchmark (JDK11 is faster except two cases)

**B. CASES WE NEED TO INVESTIGATE MORE LATER**

- [x] AggregateBenchmark (JDK11 is slower in general)

- [x] CompressionSchemeBenchmark (JDK11 is slower in general except `string`)

- [x] DataSourceReadBenchmark (JDK11 is slower in general)

- [x] DateTimeBenchmark (JDK11 is slightly slower in general except `parsing`)

- [x] MakeDateTimeBenchmark (JDK11 is slower except two cases)

- [x] MiscBenchmark (JDK11 is slower except ten cases)

- [x] OrcV2NestedSchemaPruningBenchmark (JDK11 is slower)

- [x] ParquetNestedSchemaPruningBenchmark (JDK11 is slower except six cases)

- [x] RangeBenchmark (JDK11 is slower except one case)

`FilterPushdownBenchmark/InExpressionBenchmark/WideSchemaBenchmark` will be compared later because they take a long time to run.

### Why are the changes needed?

According to the results, there are some differences between JDK8 and JDK11.

This will be a baseline for future improvements and comparisons. Also, for reproducibility, the following environment is used.

- Instance: `r3.xlarge`

- OS: `CentOS Linux release 7.5.1804 (Core)`

- JDK:

  - `OpenJDK Runtime Environment (build 1.8.0_222-b10)`

  - `OpenJDK Runtime Environment 18.9 (build 11.0.4+11-LTS)`

### Does this PR introduce any user-facing change?

No.

### How was this patch tested?

This is a test-only PR. We need to run the benchmarks.

Closes #26003 from dongjoon-hyun/SPARK-29320.

Authored-by: Dongjoon Hyun <dhyun@apple.com>

Signed-off-by: Dongjoon Hyun <dhyun@apple.com>

### What changes were proposed in this pull request?

Scala 2.13 moves the parallel collections classes to a separate library, so first, this establishes a `scala-2.13` profile to bring them back, for future use.

However, the library enables the `.par` implicit conversions via a new class that is not in 2.12, which makes cross-building hard. This implements a suggested workaround from https://github.com/scala/scala-parallel-collections/issues/22 to avoid `.par` entirely.

### Why are the changes needed?

To compile for Scala 2.13 and, later, to work with 2.13.

### Does this PR introduce any user-facing change?

Should not, no.

### How was this patch tested?

Existing tests.

Closes #25980 from srowen/SPARK-29296.